Digital Healthcare – Market, projections, and trends

Note to Readers (2024 Update)

This white paper, originally published in 2020, explores key projections, market dynamics, and trends in digital healthcare. Since its publication, the field has evolved significantly, and the accompanying detailed report has been updated to reflect the state of digital healthcare in 2024. For the most current insights and analysis, please refer to the updated report (also linked at the end of this blog).

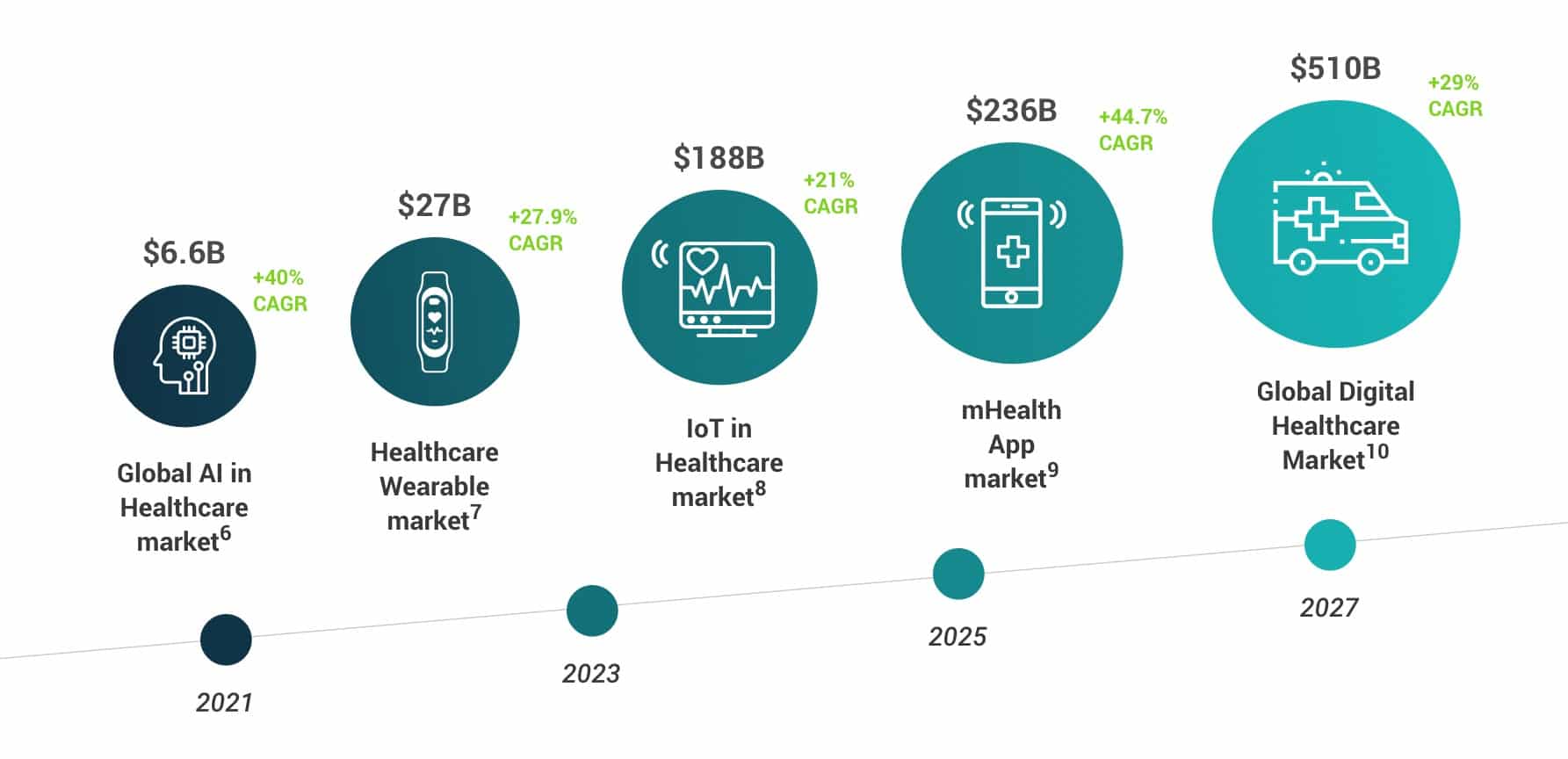

If you work in the healthcare industry, you are likely familiar with some uses of IoT devices. According to Gartner (2020), 79% of healthcare providers are already successfully employing IoT solutions. [1] However, this is just the beginning. Although the growth of digital health adoption had stalled before COVID-19 [2], the market is picking up speed again. Indeed, Q3 2020 was a record quarter for investments in healthcare companies [3], and the market expects investments in healthtech to keep rising in the coming years [4]. Today, underutilized data plays a major role in healthtech innovation [17], and the growing importance of healthcare data for future offerings is evident [5]. Take a look at how analysts from Gartner to Accenture and Forrester expect the market to grow:

The digital healthcare market 2020 and beyond

- Analysts expect Artificial Intelligence in healthcare to reach $6.6 billion by 2021 (with a 40% CAGR). [6]

- The Internet of Medical Things (IoMT) market is expected to cross $136 billion by 2021. [11]

- Analysts expect the healthcare wearable market to have a market volume of $27 billion by 2023 (with a 27.9% CAGR). [7]

- The IoT industry is projected to be worth $6.2 trillion by 2025 and around 30% of that market (or about $167 billion) will come from healthcare. [8]

- Analysts expect the global medical health apps market to grow to $236 billion by 2026, reflecting a shift towards value-based care. [9]

- The global digital health market is projected to reach $510.4 billion by 2025 (with a 29% CAGR). [10]
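Several of the projections above quote a compound annual growth rate (CAGR). As a rough illustration of how such a rate links a starting value to a projected one, here is a minimal Python sketch. It uses the two figures that appear in the title of reference [10] (USD 111.4 billion in 2019, USD 510.4 billion in 2025). The function names and the choice of six compounding periods are assumptions made for this example, not part of the cited report.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate that links two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures from the title of reference [10], in billions of USD.
START_2019 = 111.4
END_2025 = 510.4
# Assumption for this sketch: six compounding periods between 2019 and 2025.
PERIODS = 6

if __name__ == "__main__":
    rate = implied_cagr(START_2019, END_2025, PERIODS)
    print(f"Implied CAGR: {rate:.1%}")
    projected = project_value(START_2019, 0.29, PERIODS)
    print(f"Value after {PERIODS} years at 29%: {projected:.1f} billion USD")
```

Under these assumptions, the implied rate comes out close to the 29% quoted in the source. Small differences are expected because published reports round their figures and may count the forecast period differently.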

The healthcare industry has been struggling with shrinking payments and cost optimizations for years. [18] Fueled by the need to adapt in light of the COVID pandemic, digital technologies are now bringing extensive changes to this struggling industry quickly. Data is moving to the center of this changing ecosystem and harbors both risks and opportunities on a new scale. [21] The basic architecture and infrastructure that make data reliably, securely, and quickly available where it is needed will be decisive for the success or failure of digital healthcare solutions. [17] [21]

We recommend keeping an eye on the following five trends.

The 5 biggest digital healthcare trends to watch

Artificial Intelligence (AI)

Accenture estimates that AI applications can help save up to $150 billion annually for the US healthcare economy by 2026. [6] It is therefore no wonder that the healthcare sector is expected to be among the top five industries investing in AI in the next couple of years. [19] The three AI applications with the greatest near-term value in healthcare are: 1. robot-assisted surgery ($40 billion), 2. virtual nursing assistants ($20 billion), and 3. administrative workflow assistance ($18 billion). [6]

Big Data / Analytics

The goal of big data analytics solutions is to improve the quality of patient care and the overall healthcare ecosystem. The global healthcare Big Data Analytics market is predicted to reach $39 billion by 2025. [12] The main areas of growth are medical data generation in the form of Electronic Health Records (EHR), biometric data, and sensor data.

Internet of Medical Things (IoMT)

The broader digital health market, of which IoMT is a part, is expected to reach $508.8 billion by 2027. [13] According to Gartner, 79% of healthcare providers are already using IoT in their processes. [27] During COVID, IoMT devices have been used to increase safety and efficiency in healthcare, e.g. by providing and automating clinical assistance and treatment for infected patients, which lessens the burden on specialists. Future applications, like augmented reality glasses that assist during surgery, are shifting the focus of investments further toward IoMT. [14]

Telehealth / Telemedicine

Telecommunications technology enables doctors to diagnose and treat patients remotely. Consumer adoption of telehealth skyrocketed in 2020, and McKinsey believes that up to $250 billion of current US healthcare spend could potentially be virtualized. [25] Many patients also view telehealth offerings more favorably and, having had good experiences, plan to continue using telehealth in the future. [26] Unsurprisingly, telemedicine stocks are also growing rapidly. [14]

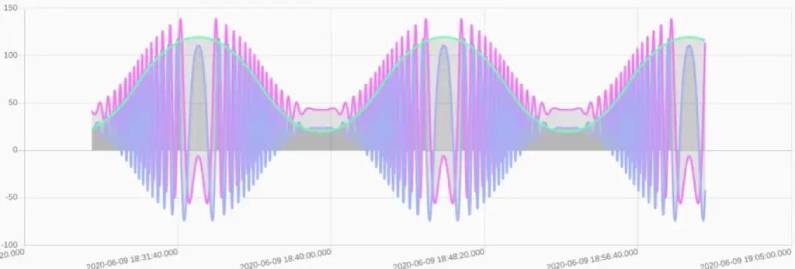

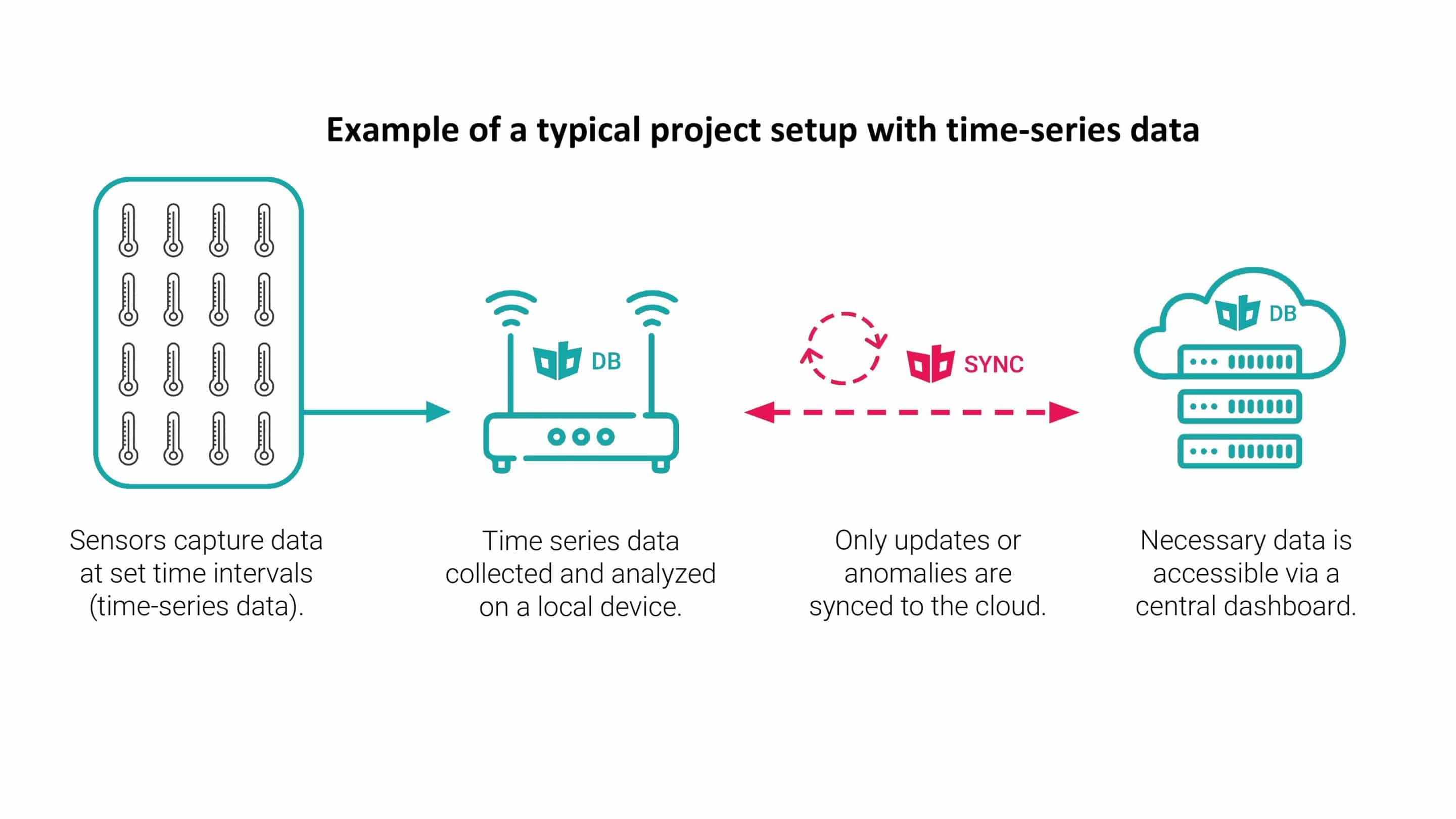

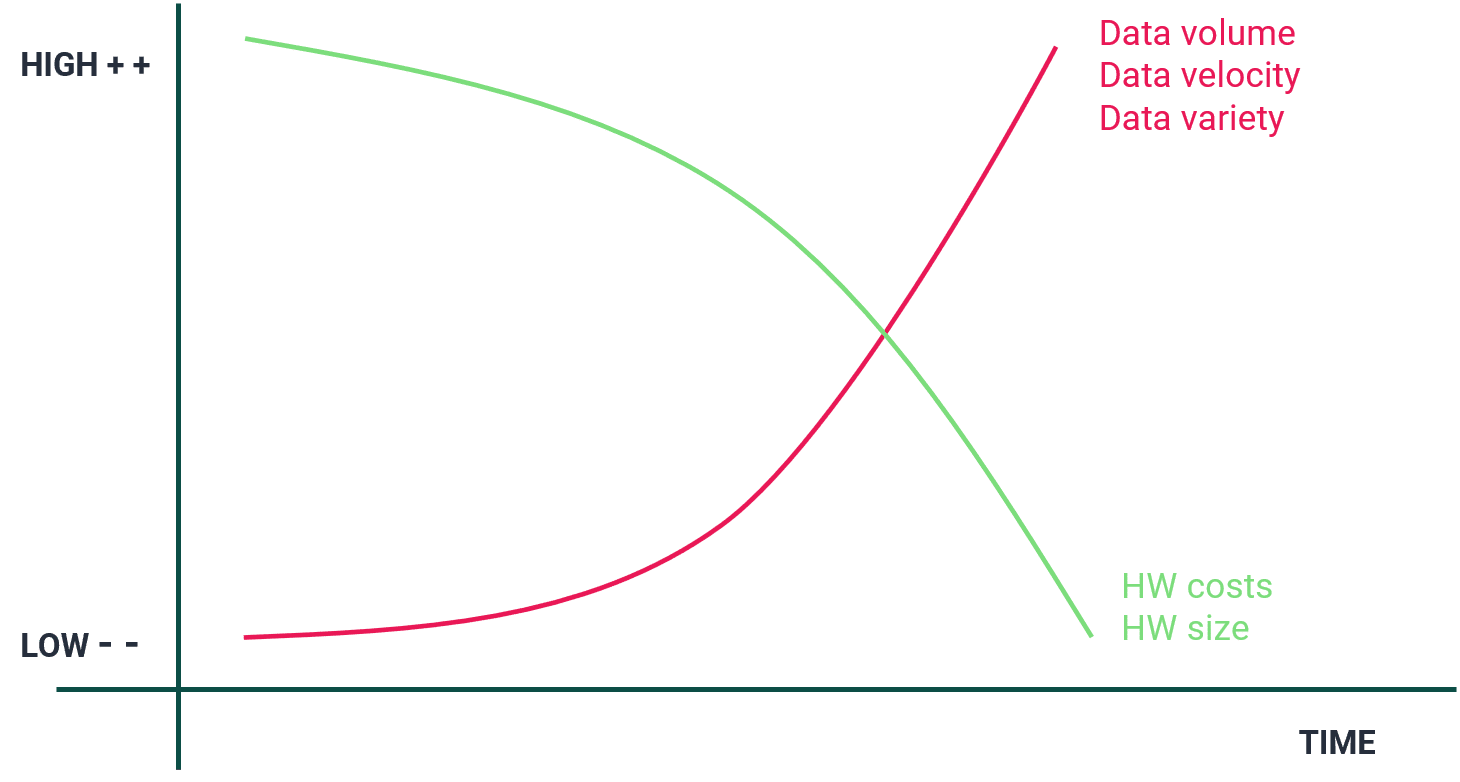

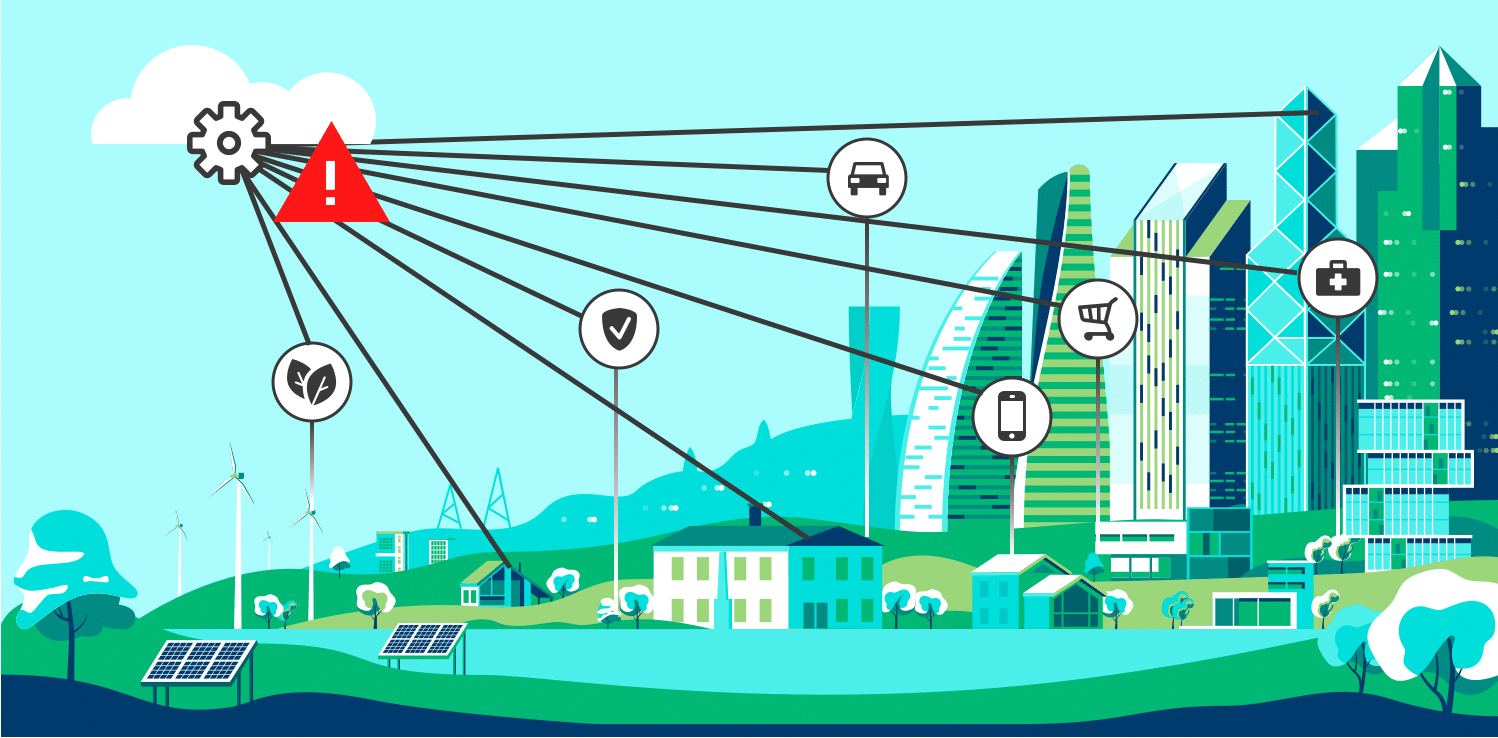

Edge Computing

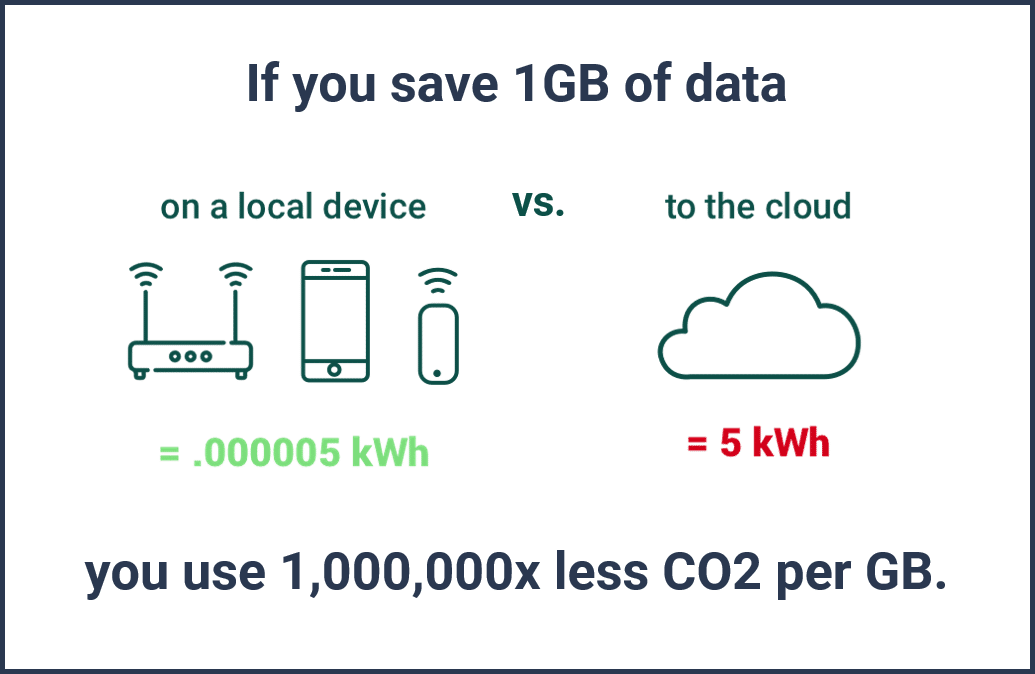

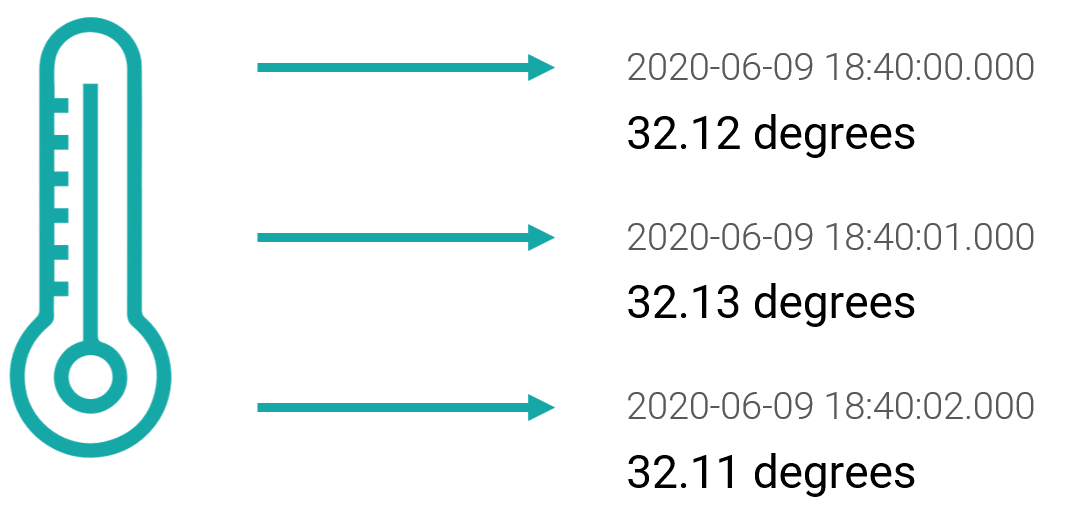

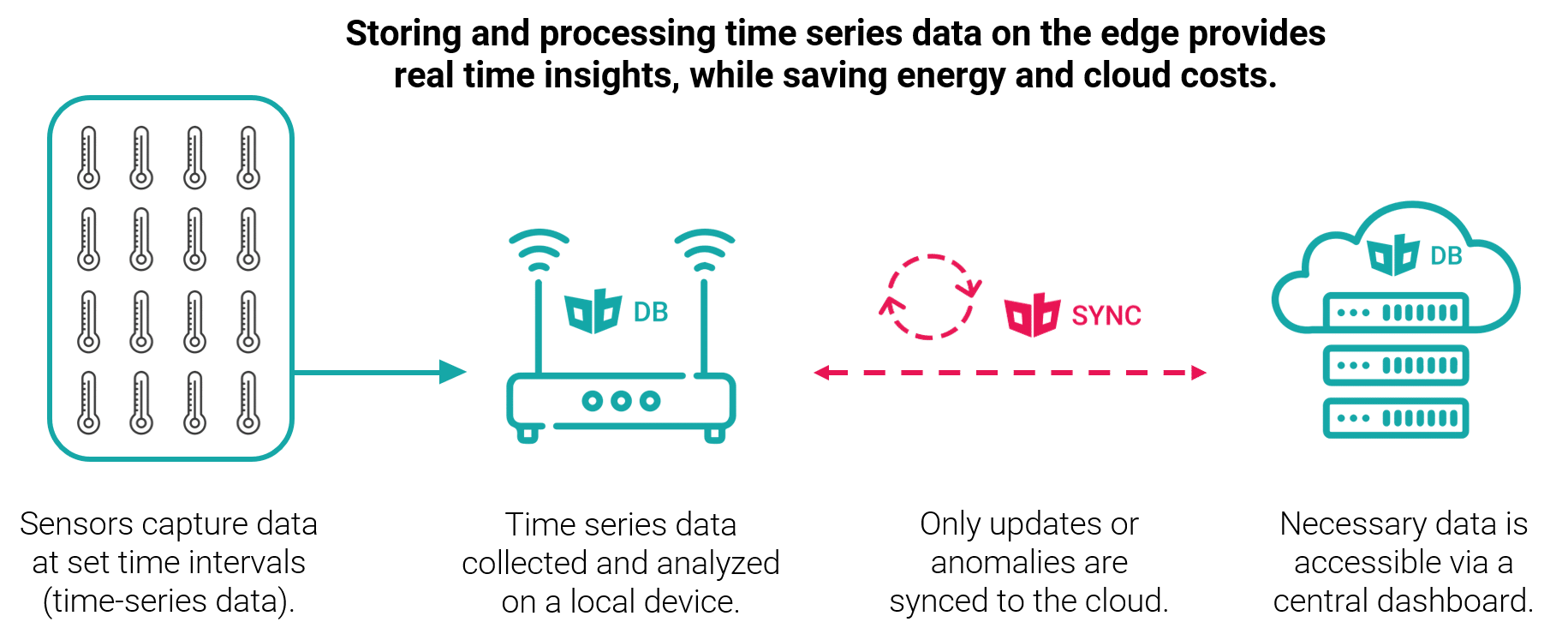

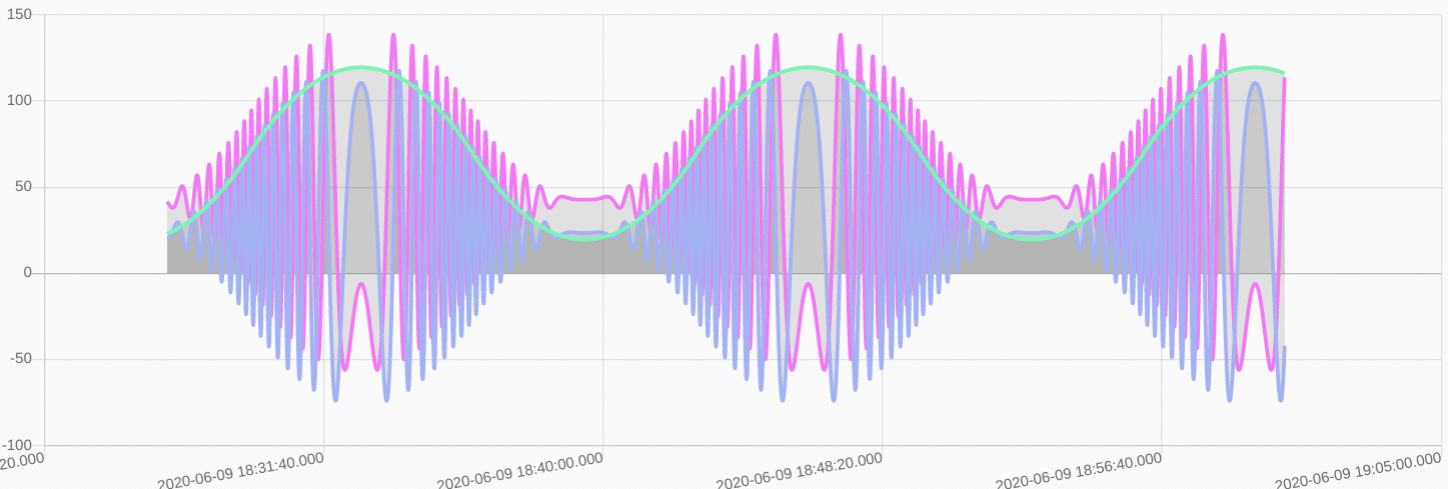

Edge computing is a technological megashift happening in computing. [23] Instead of pushing data to the cloud to be computed, processing is done locally, on ‘the edge’. [15] Edge computing is one of the key technologies for making healthcare more connected, secure, and efficient. [22] Indeed, the digital healthcare ecosystem of the future depends on an infrastructure layer that makes health data accessible when and where it is needed (data liquidity). [21] Accordingly, IDC expects the worldwide edge computing market to reach $250.6 billion in 2024 (with a 12.5% CAGR) [24], with healthcare identified as one of the leading industries that will adopt edge computing. [16]
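To make the idea of local processing more concrete, here is a minimal Python sketch of what a device on the edge might do: it summarizes raw heart-rate readings locally, so that only a small summary would need to be sent onward instead of every sample. All names in it (`HeartRateSummary`, `summarize_on_device`) are hypothetical, and the alert threshold is an arbitrary illustrative value, not a clinical recommendation.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, List


@dataclass
class HeartRateSummary:
    """Compact result that could be forwarded instead of the raw samples."""
    sample_count: int
    mean_bpm: float
    max_bpm: int
    alerts: List[int]


def summarize_on_device(
    readings_bpm: Iterable[int],
    alert_threshold_bpm: int = 150,  # illustrative value only, not clinical guidance
) -> HeartRateSummary:
    """Reduce raw readings to a summary on the device itself."""
    readings = list(readings_bpm)
    if not readings:
        raise ValueError("no readings to summarize")
    # Keep only the readings at or above the threshold as alerts.
    alerts = [bpm for bpm in readings if bpm >= alert_threshold_bpm]
    return HeartRateSummary(
        sample_count=len(readings),
        mean_bpm=mean(readings),
        max_bpm=max(readings),
        alerts=alerts,
    )


if __name__ == "__main__":
    summary = summarize_on_device([72, 75, 160, 80])
    print(summary)
```

In a real deployment, the summary would typically also be stored locally and synchronized when a connection is available. That part is left out here to keep the sketch short.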

The healthcare market is in the middle of a fast digital transformation process. Drivers such as COVID, growing IoT adoption in healthcare, and underlying social mega-trends are pushing digital healthcare growth to new heights. This rapid change confronts the digital healthcare industry with many challenges, both technical and regulatory. At the same time, it offers the healthcare market a wealth of opportunities.

References

[1] https://www.computerworld.com/article/3529427/how-iot-is-becoming-the-pulse-of-healthcare.html / https://www.gartner.com/en/documents/3970072

[2] https://www.accenture.com/us-en/insights/health/leaders-make-recent-digital-health-gains-last

[3] https://sifted.eu/articles/europes-healthtech-industry-2020/

[4] https://www.mobihealthnews.com/news/emea/health-tech-investments-will-continue-rise-2020-according-silicon-valley-bank

[5] https://news.crunchbase.com/news/for-health-tech-startups-data-is-their-lifeline-now-more-than-ever/

[6] https://www.accenture.com/us-en/insight-artificial-intelligence-healthcare

[7] https://www.grandviewresearch.com/industry-analysis/wearable-medical-devices-market

[8] https://www.marketsandmarkets.com/PressReleases/iot-healthcare.asp

[9] https://www.grandviewresearch.com/press-release/global-mhealth-app-market

[10] https://www.globenewswire.com/news-release/2020/05/23/2037920/0/en/Global-Digital-Health-Market-was-Valued-at-USD-111-4-billion-in-2019-and-is-Expected-to-Reach-USD-510-4-billion-by-2025-Observing-a-CAGR-of-29-0-during-2020-2025-VynZ-Research.html

[11] https://www2.stardust-testing.com/en/the-digital-transformation-trends-and-challenges-in-healthcare

[12] https://www.prnewswire.com/news-releases/healthcare-analytics-market-size-to-reach-usd-40-781-billion-by-2025--cagr-of-23-55---valuates-reports-301041851.html

[13] https://www.globenewswire.com/news-release/2020/11/25/2133473/0/en/Global-Digital-Health-Market-Report-2020-Market-is-Expected-to-Witness-a-37-1-Spike-in-Growth-in-2021-and-will-Continue-to-Grow-and-Reach-US-508-8-Billion-by-2027.html

[14] https://www.nasdaq.com/articles/iomt-meets-new-healthcare-needs%3A-3-medtech-trends-to-watch-2020-11-27

[15] https://go.forrester.com/blogs/predictions-2021-technology-diversity-drives-iot-growth/

[16] https://www.prnewswire.com/news-releases/state-of-the-edge-forecasts-edge-computing-infrastructure-marketworth-700-billion-by-2028-300969120.html

[17] https://news.crunchbase.com/news/for-health-tech-startups-data-is-their-lifeline-now-more-than-ever/

[18] https://www.gartner.com/en/newsroom/press-releases/2020-05-21-gartner-says-50-percent-of-us-healthcare-providers-will-invest-in-rpa-in-the-next-three-years

[19] https://www.idc.com/getdoc.jsp?containerId=prUS46794720

[20] https://www.mckinsey.com/industries/healthcare-systems-and-services/our-insights/the-great-acceleration-in-healthcare-six-trends-to-heed

[21] https://www.mckinsey.com/industries/healthcare-systems-and-services/our-insights/the-next-wave-of-healthcare-innovation-the-evolution-of-ecosystems

[22] https://www.cbinsights.com/research/internet-of-medical-things-5g-edge-computing-changing-healthcare/

[23] https://siliconangle.com/2020/12/08/future-state-edge-computing/

[24] https://www.idc.com/getdoc.jsp?containerId=prUS46878020

[25] https://www.mckinsey.com/industries/healthcare-systems-and-services/our-insights/telehealth-a-quarter-trillion-dollar-post-covid-19-reality

[26] https://go.forrester.com/blogs/will-virtual-care-stand-the-test-of-time-if-youre-asking-the-question-its-time-to-catch-up/

[27] https://www.computerworld.com/article/3529427/how-iot-is-becoming-the-pulse-of-healthcare.html
