What is Data Synchronization + How to Keep Data in Sync

What is Data Sync / Data Synchronization in app development?

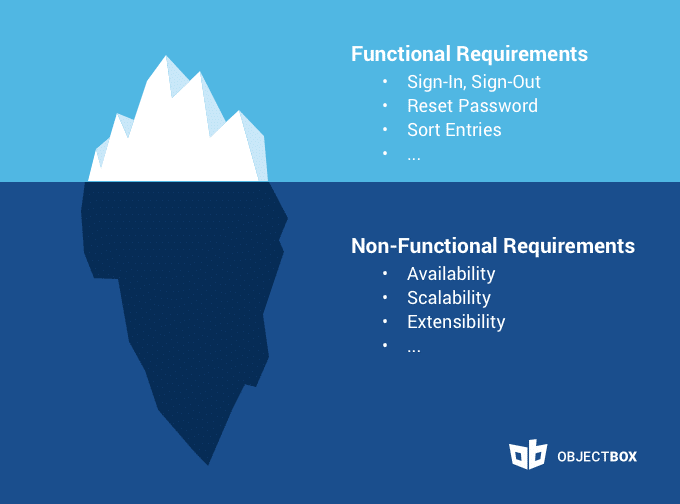

Data Synchronization (Sync) is the process of establishing consistency and consolidation of data between different devices, including offline data sync to ensure accessibility even without a constant internet connection. It is fundamental to most IT solutions, especially in IoT and Mobile. Data Sync entails the continuous harmonization of data over time and is typically a complex, non-trivial process. Even large corporations struggle with its implementation and have had to roll back Data Sync solutions due to technical challenges.

The question Data Sync answers is:

How do you keep data sets from two (or more) data stores / databases – separated by space and time – mirrored with one another as closely as possible, in the most efficient way?

Data Sync challenges include asynchrony, conflicts, slow bandwidth, flaky networks, third-party applications, and file systems that have different semantics.

Data Sync versus Data Replication in Databases

Data replication is the process of storing the same data in several locations to prevent data loss and improve data availability and accessibility. Typically, data replication means that all data is fully mirrored / backed up / replicated on another instance (device/server). This way, all data is stored at least twice. Replication typically works in one direction only (unidirectional); there is no additional logic to it and no possibility of conflicts.

In contrast, Data Sync typically relates to a subset of the data (selection) and works in two directions (bi-directional). This adds a layer of complexity, because now conflicts can arise. Of course, if you select all data for synchronization in one direction, it will yield the same result as replication. However, replication cannot replace synchronization.
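To make the difference concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how any particular product works: the `Record` type, the logical `updated_at` timestamp, and the last-write-wins rule are all hypothetical choices, and deletions are not handled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    value: str
    updated_at: int  # assumed: a logical timestamp comparable across stores


Store = dict[str, Record]


def replicate(source: Store, target: Store) -> None:
    """Unidirectional replication: target becomes a full mirror of source."""
    target.clear()
    target.update(source)


def sync(a: Store, b: Store, selected: set[str]) -> None:
    """Bidirectional sync of a selected subset of keys.

    Conflicts (both sides hold the key) are resolved by last-write-wins;
    on a timestamp tie, store `a` wins. Both rules are assumptions.
    """
    for key in selected:
        rec_a, rec_b = a.get(key), b.get(key)
        if rec_a is None and rec_b is None:
            continue  # nothing to synchronize for this key
        if rec_b is None or (rec_a is not None and rec_a.updated_at >= rec_b.updated_at):
            b[key] = rec_a  # a's version is the only one, or the newer one
        else:
            a[key] = rec_b  # b's version is the only one, or the newer one
```

Note that `replicate` never has to decide between two versions, while `sync` needs a conflict rule as soon as both stores can change the same record. Last-write-wins is only one possible rule; it silently discards the older change, which may or may not be acceptable for a given use case.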

Why do you need to keep data in sync?

Think about it – if clocks were not in sync, everyone would live on a different time. While I can see an upside to this, it would result in many inefficiencies as you could not rely on schedules. When business data is not in sync (up-to-date everywhere), it harms the efficiency of the organization due to:

- Isolated data silos

- Conflicting data / information states

- Duplicate data / double effort

- Outdated information states / incorrect data

In the end, the members of such an organization would not be able to communicate and collaborate efficiently with each other. They would instead be spending a lot of time on unnecessary work and “conflict resolution”. On top of that, management would lack an accurate overview and the data-driven insights needed to prioritize and steer the company. The underlying mechanism that keeps data up-to-date across devices is a technical process called data synchronization (Sync), which often requires offline data sync capabilities to maintain consistency even when devices are offline. And while we expect these processes to “just work”, someone needs to implement and maintain them, which is a non-trivial task.

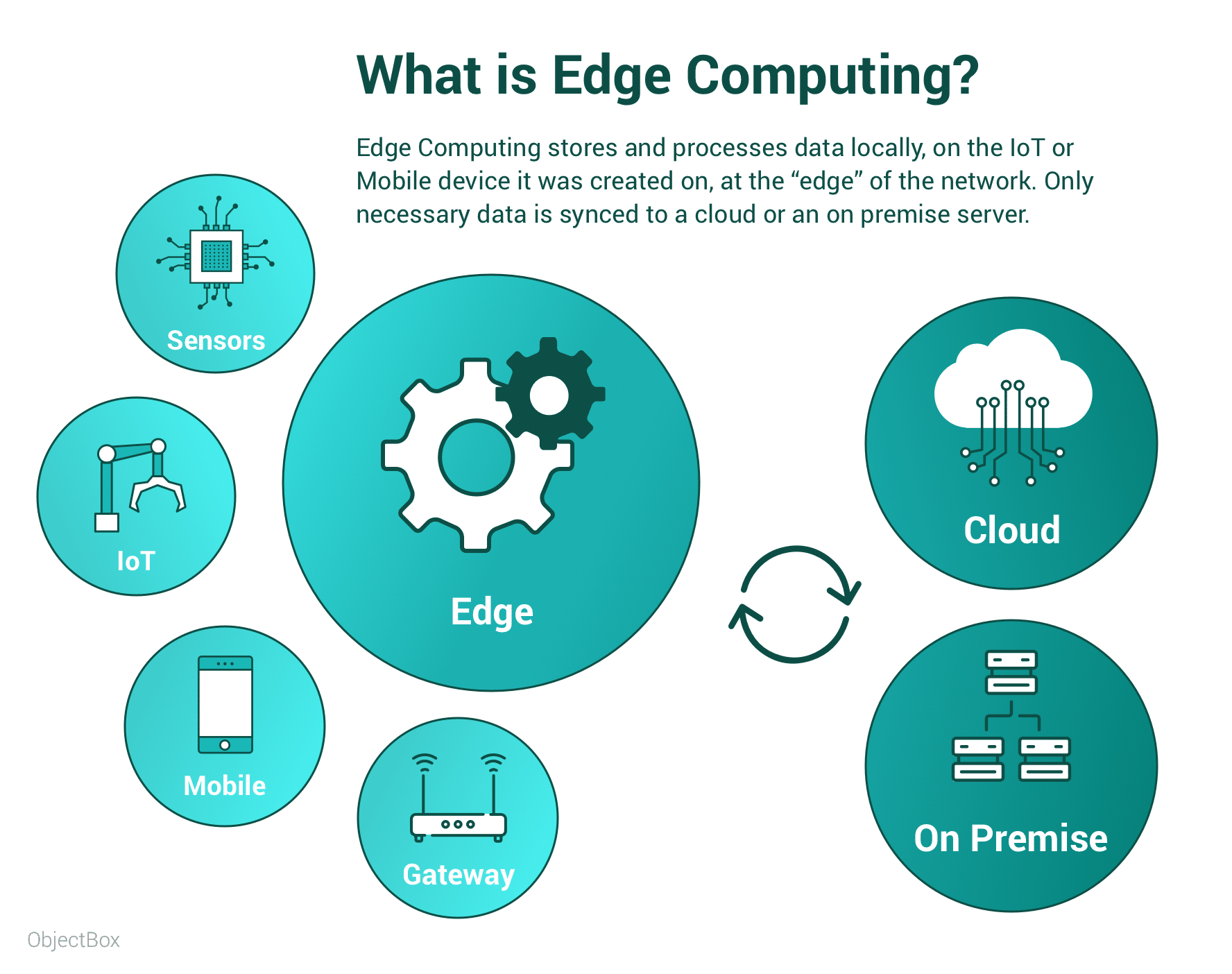

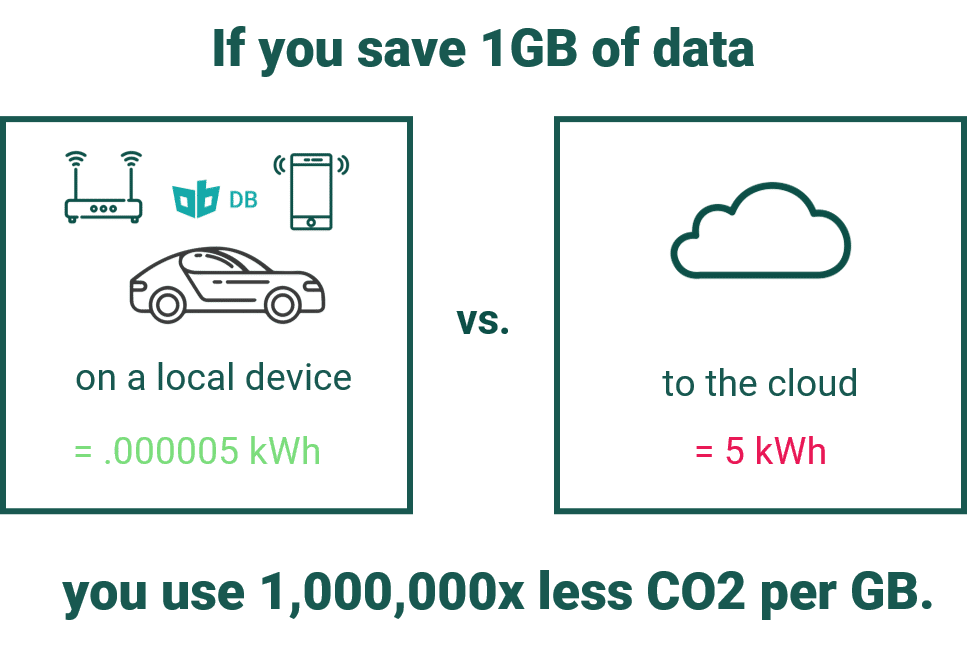

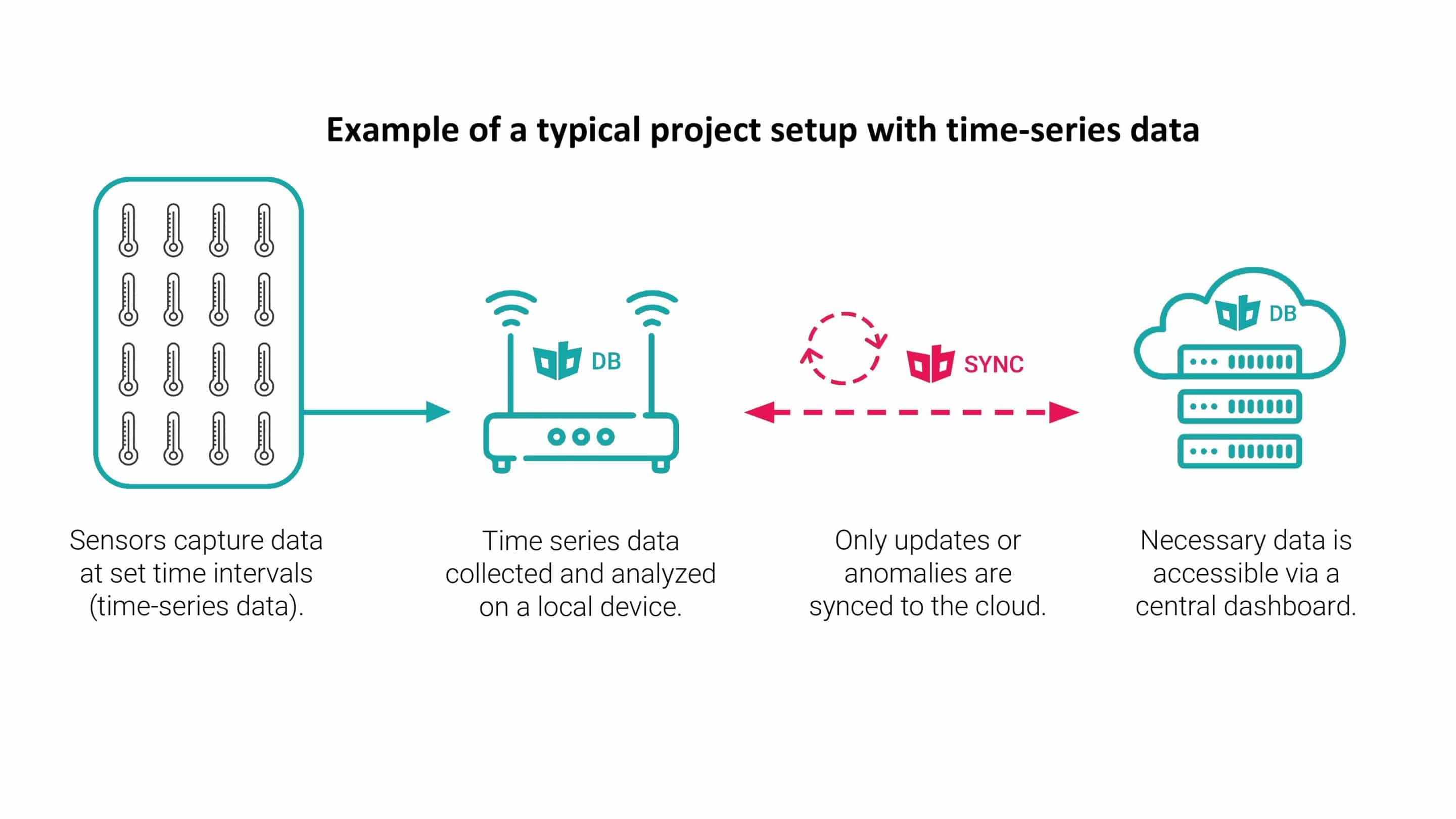

Growing data masses and shifts in data privacy requirements call for sensible usage of network bandwidth and the cloud. Edge computing with selective data synchronization is an effective way to manage which data is sent to the cloud, and which data stays on the device. Keeping data on the edge and synchronizing only selected data sets reduces the data volume that is transferred via the network and stored in the cloud. Accordingly, this means lower mobile networking and cloud costs. On top of that, it also enables higher data security and data privacy, because it makes it easy to store personal and private data with the user. When data stays with the user, data ownership is clear too.
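As a rough illustration of selective synchronization, the sketch below decides on the device which records are eligible to leave it. The `Reading` type, the `personal` flag, and the threshold rule are hypothetical and only stand in for whatever selection criteria an application actually needs.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str
    value: float
    personal: bool = False  # hypothetical flag: data that must stay on the device


def select_for_cloud(readings: list[Reading], threshold: float) -> list[Reading]:
    """Return only the readings that should be sent to the cloud.

    Assumed rule: personal data never leaves the device, and only
    values above `threshold` are interesting enough to upload.
    """
    return [r for r in readings if not r.personal and r.value > threshold]
```

With four local readings of which one is personal and two are below the threshold, only a single record would be transferred; the rest stays on the device.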

Unidirectional Data Replication

Bidirectional Data Synchronization

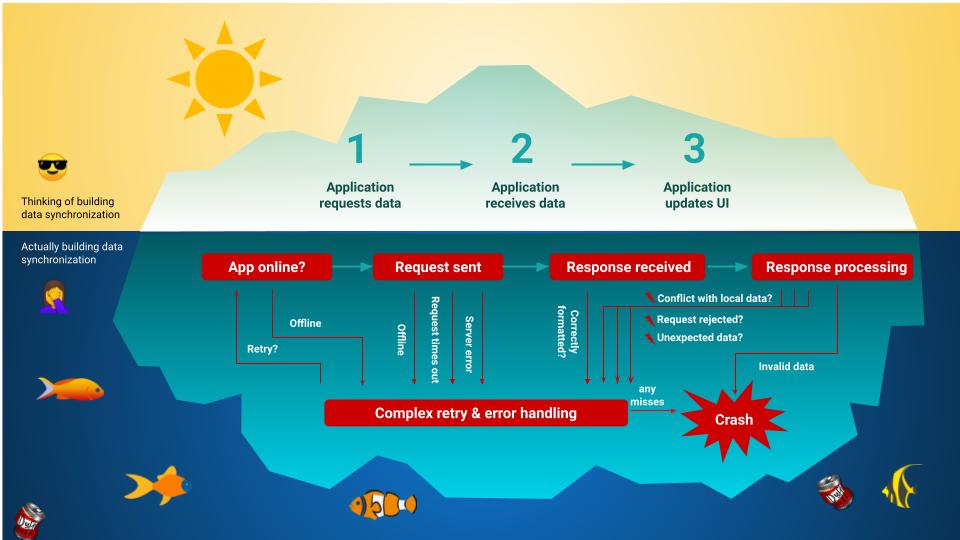

Out-of-the-box Sync magic: Syncing is hard

Almost every Mobile or IoT application needs to sync data, so every developer is aware of the basic concept and challenges. This is why many experienced developers appreciate out-of-the-box solutions. While JSON / REST offers a great concept to transfer data, there is more to Data Sync than meets the eye. Of course, the complexity of Sync varies widely depending on the use case. For example, the amount of data, the frequency of data changes, whether sync is synchronous or asynchronous, the number of devices (connections), and the kind of client-server or peer-to-peer setup needed all affect the complexity.

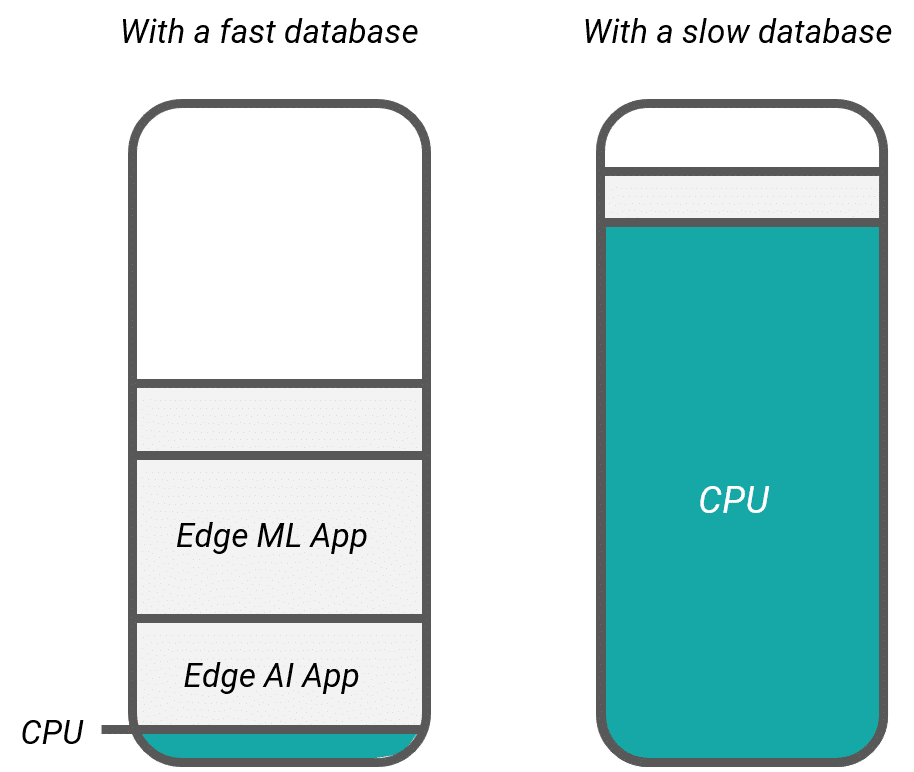

What looks easy at first hides a complex bit of coding in practice and opens a can of worms for testing. For an application to work seamlessly across devices – independent of the network, which can be offline, flaky, or only occasionally connected – an app developer must anticipate and handle a host of local and network failures to ensure data consistency. Offline sync capabilities help applications continue to function in these scenarios, ensuring reliable performance. Moreover, for devices with restricted memory, battery and/or CPU resources (e.g. Mobile and IoT devices), resource sensitivity is also essential. Data storage and synchronization solutions must be effective, efficient, and sustainable.
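One common building block for coping with an offline or flaky network is a local queue of pending changes, sometimes called an outbox. The following is a minimal sketch of that idea, not a complete sync engine: the `Outbox` class and the `send` callback are hypothetical, and the callback is assumed to raise `ConnectionError` when the network is unavailable.

```python
from collections import deque
from typing import Callable


class Outbox:
    """Queues local changes and sends them in order once the network allows."""

    def __init__(self, send: Callable[[dict], None]) -> None:
        self._send = send  # hypothetical transport; raises ConnectionError on failure
        self._pending: deque[dict] = deque()

    def record(self, change: dict) -> None:
        """Store a change locally so the app keeps working while offline."""
        self._pending.append(change)

    def flush(self) -> int:
        """Try to send pending changes in order, stopping at the first failure.

        Returns the number of changes delivered in this attempt.
        """
        delivered = 0
        while self._pending:
            try:
                self._send(self._pending[0])
            except ConnectionError:
                break  # keep the change queued and retry on the next flush
            self._pending.popleft()
            delivered += 1
        return delivered

    def __len__(self) -> int:
        return len(self._pending)
```

A change is removed from the queue only after `send` returns, so a failed attempt loses nothing. The flip side is that a change can be delivered more than once if the connection drops after the server received it, which means the receiving side should tolerate duplicates. A real implementation would also persist the queue and handle conflicts, which this sketch leaves out.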

How to Keep Data in Sync Without the Headache?

Thankfully, there are out-of-the-box data synchronization solutions available on the market, which solve data syncing for developers. They fall broadly into two categories: cloud-dependent data synchronization, and independent, “edge” data synchronization. Cloud-based solutions, like Firebase, require a connection to the internet to function. Data is sent to and requested from the cloud constantly. Edge solutions, like ObjectBox, also offer “Offline Sync”: data is stored in an efficient on-device database, synchronization on and between edge devices can be done continually without an Internet connection, and Data Sync with a cloud or a backend that is not located on premise occurs once the devices go online. Below, we summarize the most popular market offerings for data synchronization (offline and cloud-based):

Couchbase

Couchbase is a Cloud DB, Edge DB and Sync offering that requires the use of Couchbase servers.

Firebase

Firebase is a Backend as a Service (BaaS) offering that was acquired by Google. Google offers it as a cloud-hosted solution for mobile developers.

Mongo Realm

Realm was acquired by MongoDB in 2019; the Mongo Realm Sync solution (Atlas Device Sync) used Realm DB on edge devices and synchronized with a MongoDB instance hosted in the cloud. However, MongoDB recently announced end-of-life for it.

ObjectBox

ObjectBox is a DB for any device, from restricted edge devices to servers, and offers an out-of-the-box Sync solution with offline sync capabilities, enabling reliable data access even without an internet connection. ObjectBox enables self-hosting on-premise / in the cloud, as well as Offline Sync.

Parse

Parse is a BaaS offering that Facebook acquired and shut down. Facebook open sourced the code. The GitHub repository is not officially maintained. You can host Parse yourself or use a Parse hosting service.

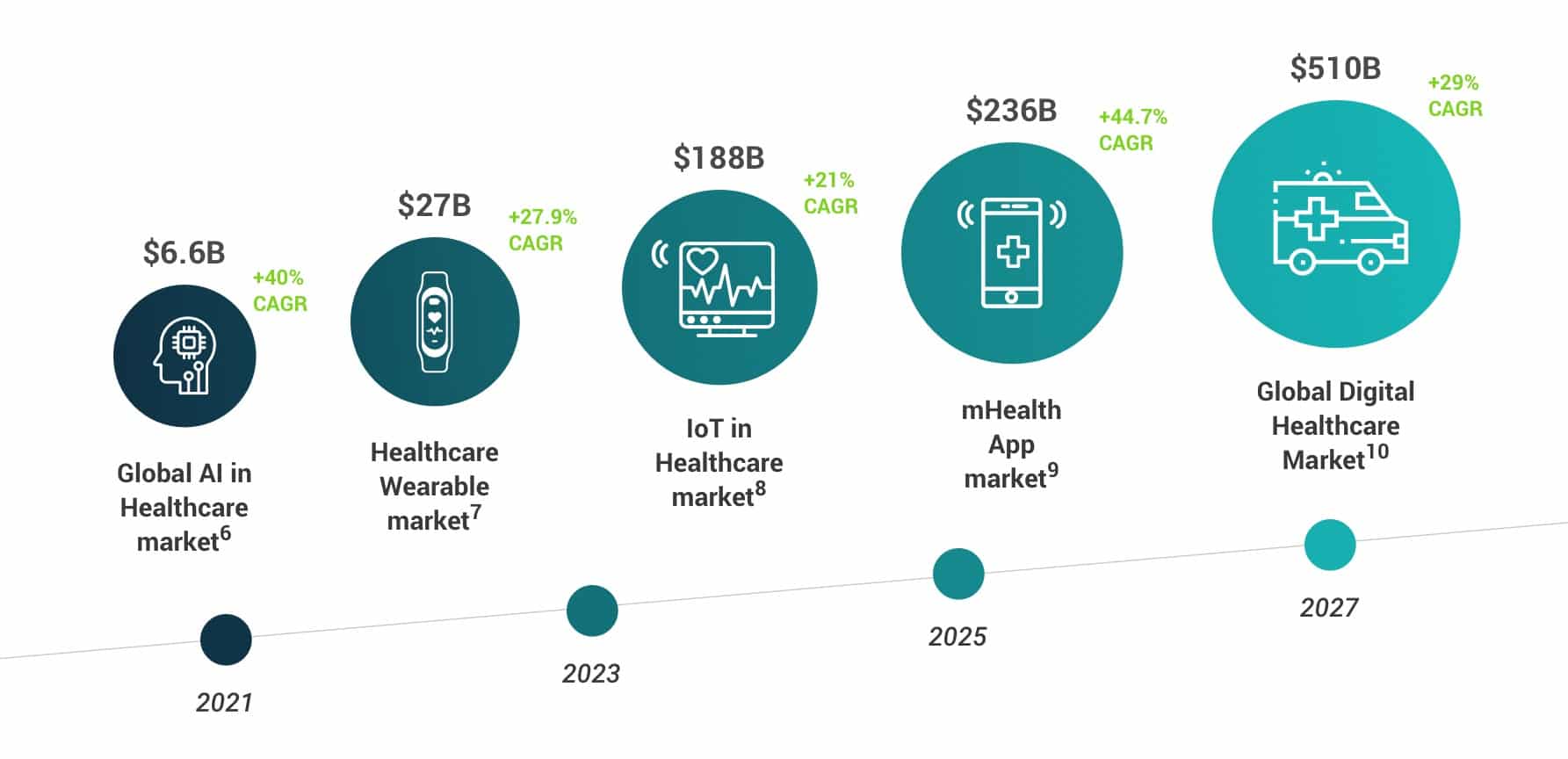

Data Sync, Edge Computing, and the Future of Data

There is a megashift happening in computing from centralized cloud computing to Edge Computing. Edge computing is a decentralized topology that entails storing and using data as close to the source of the data as possible, i.e. directly on edge devices. Accordingly, the market is growing rapidly, with projections estimating continued growth at a 34% CAGR for the next five years. The move from the cloud to the edge is strongly driven by new use cases and growing data masses. Edge data persistence and Data Sync (managing decentralized data flows), especially “Offline Sync”, are the key technologies needed for Edge Computing. Using edge data persistence, data can be stored and processed on the edge. This means applications always work, even offline, independent of a network connection, and response times are faster because data does not have to travel over the network. With Offline Sync, data can be synchronized between several edge devices in any location, independent of an Internet connection. Once a connection becomes available, selected data can be synchronized with a central server. By exchanging less data with the cloud or a central instance, data synchronization reduces the burden on the network. This brings down mobile network and cloud costs, and reduces the amount of energy used: a win-win-win solution. It also enables data privacy by design.